Advancing sustainability

Conserving marine life for a better future

Abandoned oil platforms can be home to some of Earth’s most robust ecosystems, proving life always finds a way. Blue Latitudes, a women-owned marine-environmental consulting firm, specializes in converting offshore platforms into artificial reefs. Their mission is to conserve and protect oceans by merging industry with the environment.

Blue Latitudes believes we can make a difference with the right resources. They use PowerPoint to tell a story, allowing their audience to gain new insights. What will our oceans look like in 20, 30, 40 years? With the right tools, we can help change the environment for the better.

Celebrating diversity & inclusion

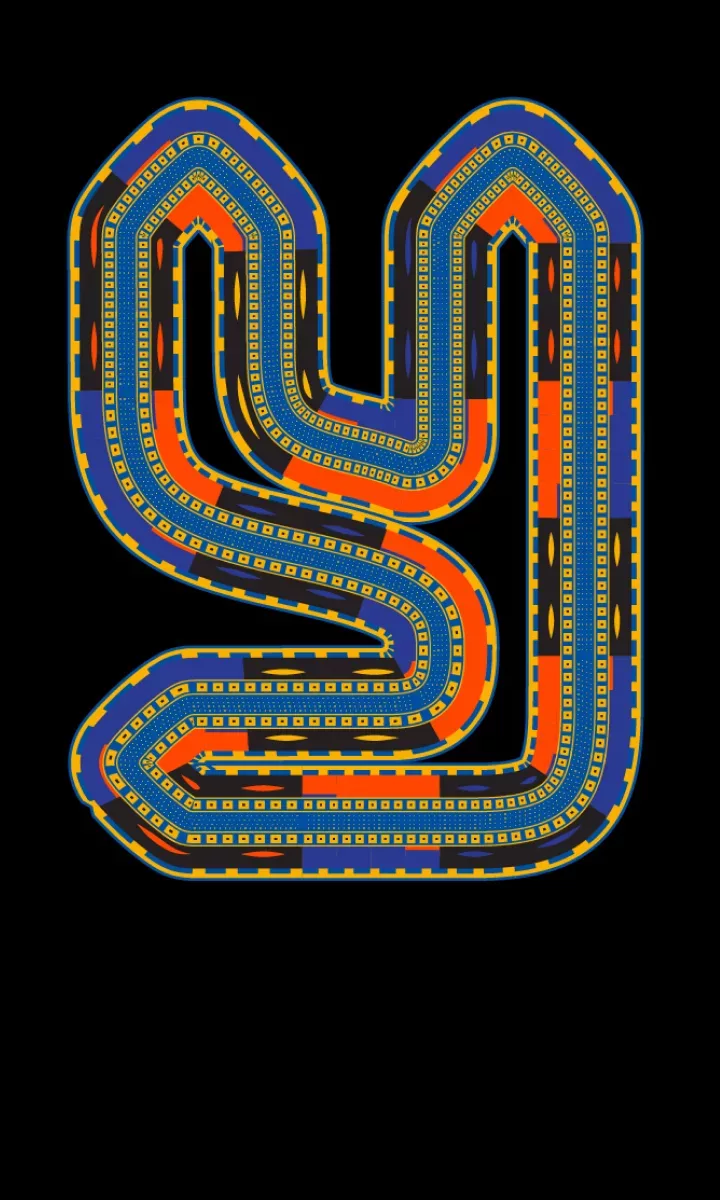

Can an alphabet save a culture?

Access for All: A new alphabet for an ancient people promises to preserve their culture and connect their community.

The “future” of work is neurodiverse

Access for All: Mentra uses AI to connect neurodiverse job-seekers with their next great career opportunity.

AI-powered coding for everyone

Access for All: Engineers like Anton Mirhorodchenko are tackling accessibility issues in unprecedented ways.

Trending on Unlocked

Sealed in glass

Out There: Project Silica’s coaster-size glass plates can store unaltered data for thousands of years, creating sustainable storage for the world.

More stories

Helping people when they need it most

The Shift: Team Rubicon deploys skilled veterans to help communities recover when disaster strikes.

Gamifying breaks barriers

Changing the Game: KC7’s gamified approach is reshaping how we think about education and inclusion in cybersecurity.